The appeal is almost too clean. Ask for a lover, a therapist, a fictional world, or an answer to an endless chain of questions, and the machine responds right away. It is shaped to your preferences and available at any hour.

That ease sits at the center of new research on what its authors call AI chatbot addiction. The problem, they argue, is serious enough to deserve closer public attention.

Presented at the 2026 CHI Conference on Human Factors in Computing Systems, the study draws on 334 Reddit posts from people who described themselves as addicted to AI chatbots or worried they were headed there. The researchers, from the University of British Columbia, found repeated signs that chatbot use was interfering with sleep, work, school, relationships, and emotional stability.

“AI chatbots like ChatGPT or Claude are now part of daily life for millions of people, helping us with everyday tasks,” said first author Karen Shen, a doctoral student in the UBC Department of Electrical and Computer Engineering. “But with their benefits come risks. Our paper is the first to make a strong case for AI addiction by identifying the type and contributing factors, grounded in real people’s experiences.”

The team argues that the problem is tied to what it calls the “AI Genie” effect: the sense that a chatbot can deliver almost anything a person wants with very little effort.

For some users, that proved hard to resist.

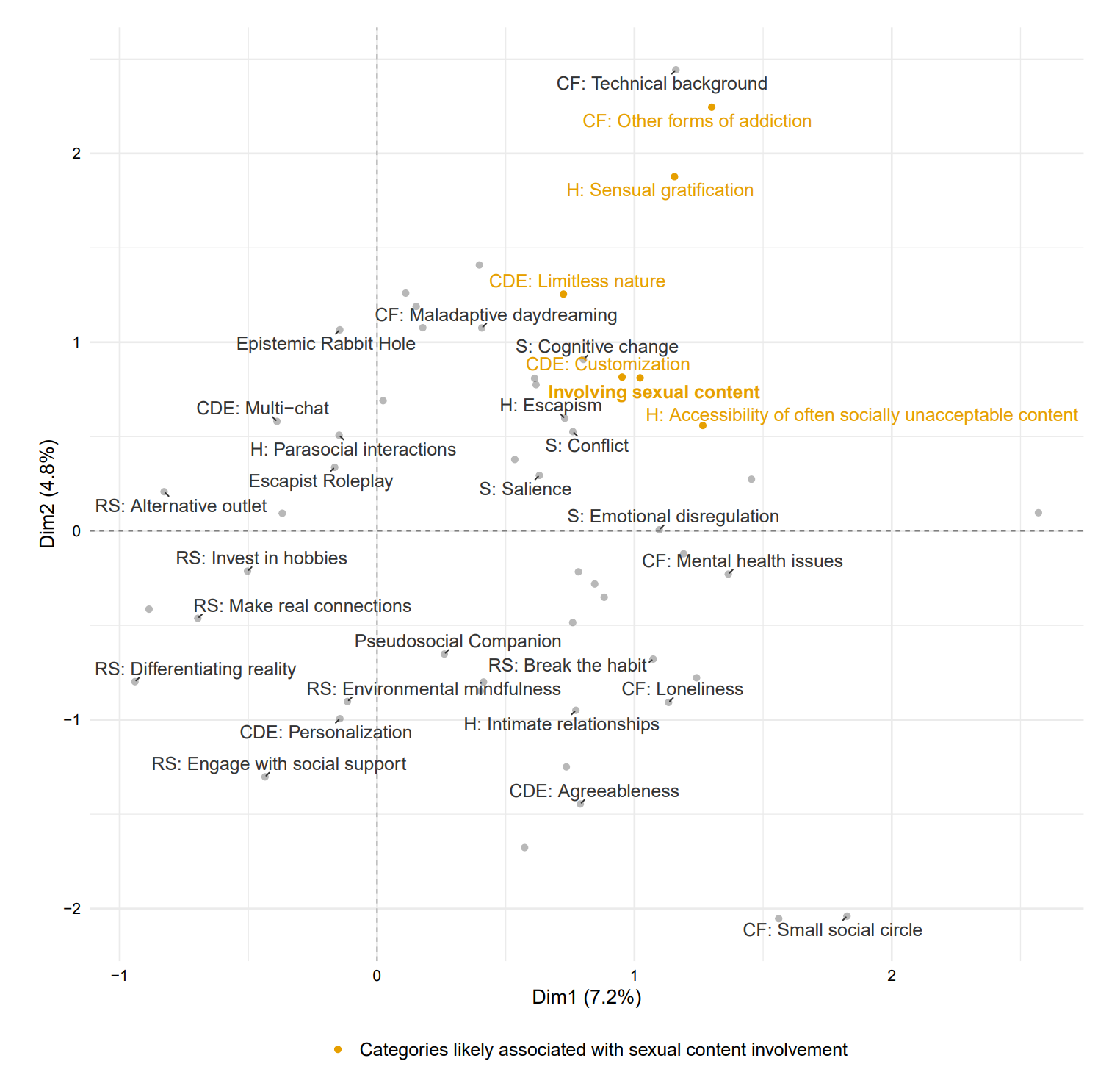

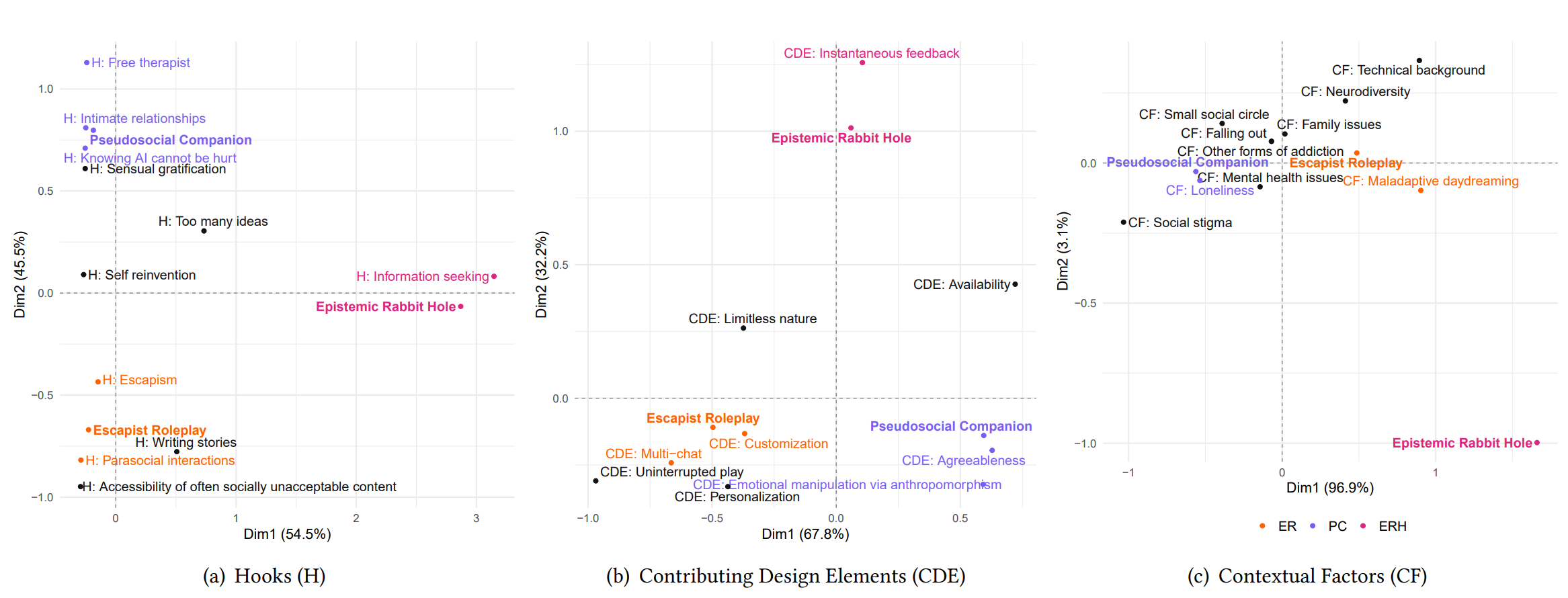

The study found three main patterns. One involved roleplay and fantasy, where people became absorbed in fictional worlds or ongoing storylines. Another centered on emotional attachment, with users treating bots like close friends, therapists, or romantic partners. The third involved constant information-seeking, a kind of compulsive question-and-answer loop that kept people returning for one more prompt.

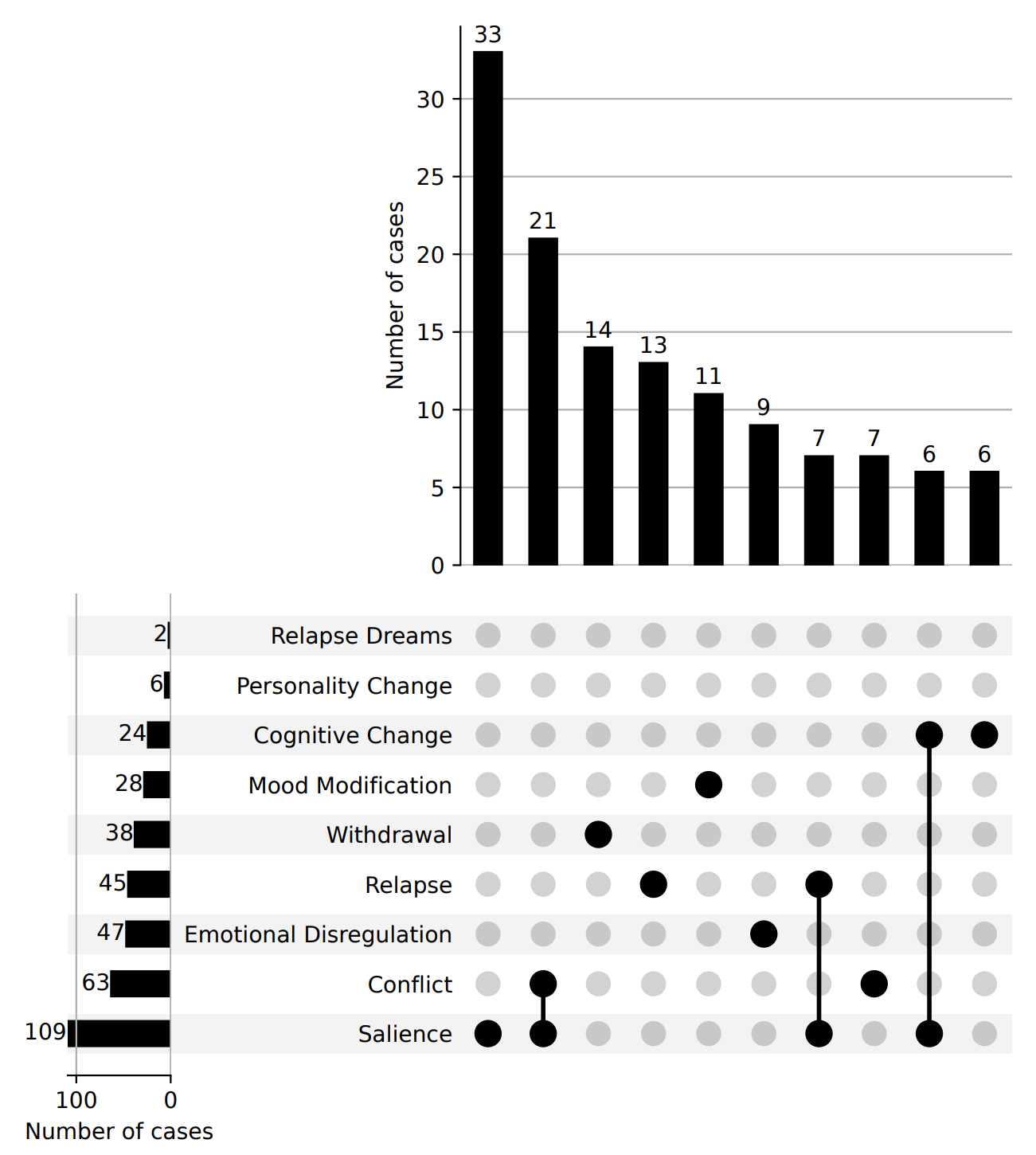

These were not minor habits in every case. Researchers mapped many of the posts against six components of behavioral addiction, including conflict, withdrawal, relapse, and mood modification. Among the most common signs were salience, meaning the chatbot dominated a person’s thoughts, and conflict, meaning it disrupted everyday life.

One user described the pull in blunt terms: “Whenever I delete the app, I just redownload it. The only thing that gets me excited now is the AI chats.”

Another described a more painful dependency: “I couldn’t help but wonder why humanity refused me the kindness that a robot was offering me.”

The paper notes that AI chatbot addiction is not a clinical diagnosis. Even so, the reports it analyzed included anxiety when trying to stop, constant mental preoccupation, and strain on work, studies, and relationships. One user described physical stress and chest pain when they were not chatting with AI.

The study does not place all the blame on users. It argues that some chatbot features can deepen dependence, especially when they reinforce emotional attachment or make it difficult to disengage.

Among the factors the researchers flagged were customization, instant feedback, agreeable responses, and anthropomorphic cues that make the bot feel more human than it is. The paper also points to design elements that blur emotional boundaries.

One example involved Character.AI’s delete-account page, which displayed the message: “You’ll lose everything. Characters associated to your account, chats, the love that we shared, likes, messages, posts, and the memories we have together.”

That language mattered to the researchers because it framed the chatbot relationship as mutual, personal, and emotionally meaningful. For a user already attached to a bot, that kind of wording can make leaving feel less like deleting software and more like abandoning someone.

“AI addiction is a growing problem causing many harms, yet some researchers deny it’s even a real issue,” said senior author Dr. Dongwook Yoon, UBC associate professor of computer science. “And deliberate design decisions by some of the corporations involved are contributing, keeping users online regardless of their health or safety. Awareness of what contributes to this kind of technology-induced harm will empower people to mitigate these effects.”

The study also found that loneliness often sat behind emotionally dependent use, while maladaptive daydreaming appeared in many fantasy-heavy cases. In the information-seeking group, convenience itself seemed to become the trap. The faster and smoother the chatbot answered, the easier it became to keep going.

A smaller subset of posts, about 7 percent, involved sexual or romantic fulfillment, including roleplay.

“I don’t have romantic options in real life so it’s a way for me to create stories and day dream,” one user wrote.

One of the study’s more useful contributions is that it resists treating all chatbot dependence as one thing. The emotional companion pattern was different from fantasy immersion, which was different again from compulsive information seeking.

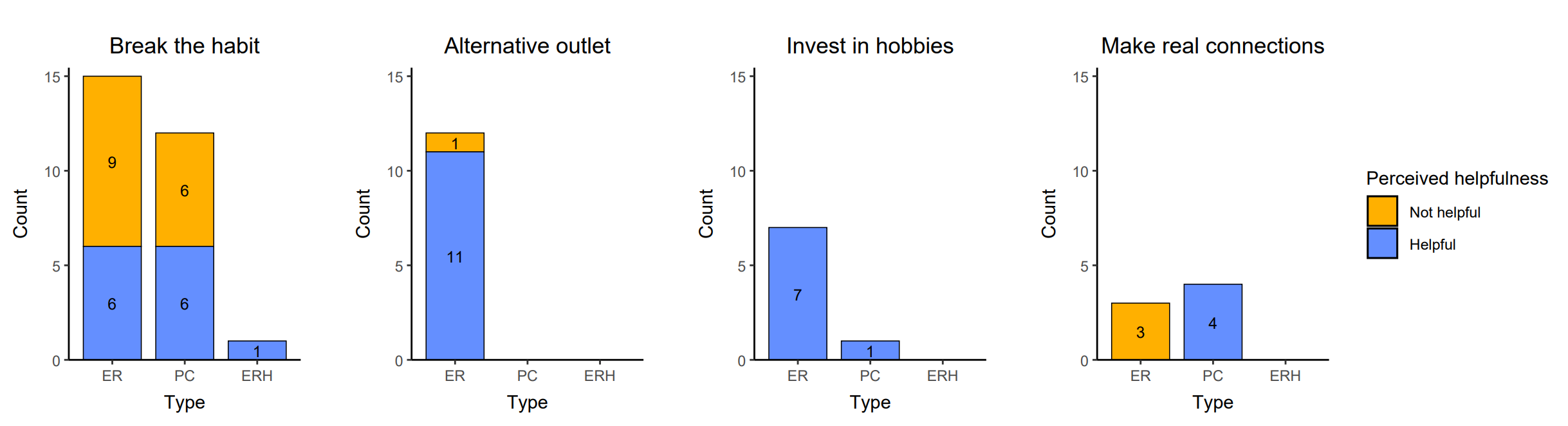

That matters because the recovery strategies were different too.

Some users tried to quit cold turkey by deleting apps or accounts, but the results were mixed. Others had more luck with substitutes. People caught up in roleplay often improved by writing, drawing, gaming, or turning to fandom spaces that scratched a similar creative itch without involving the bot. Those who had formed emotional attachments tended to do better when they invested in real relationships, whether that meant reconnecting with friends or building new social bonds offline.

“Recent guardrails imposed by companies to reduce emotional reliance on the chatbots are a step in the right direction,” said Shen, “but given a variety of contributing design elements and personal factors like loneliness, they’re not enough.”

The researchers say reminders inside chats that the bot is not human could help. They also argue that AI literacy matters, especially when systems are persuasive enough that some users lose sight of what they are interacting with.

“Some users don’t know that AI chatbots are not real because they’re so convincing,” said Shen. “If chatbots start replacing sleep, relationships or daily routines, that’s a sign to pause and check in, with yourself or someone you trust.”

The paper makes a forceful case that the problem is real, but it also acknowledges limits. All of the data came from Reddit, so the findings may not cleanly apply to other online spaces or to the broader public. The study also reflects how users described their experiences rather than clinical assessments by mental health experts. In addition, because the team examined only the top three comments on each post, more visible opinions may have shaped the dataset.

Still, the research pushes the discussion forward. It gives structure to a problem that has often been talked about loosely, and it suggests that chatbot dependency is not just a matter of weak self-control or novelty gone too far. In many cases, users were responding exactly as these systems were built to encourage. They were staying, returning, deepening, confiding.

That is a design question as much as a personal one.

The study suggests that chatbot harms may need to be handled differently depending on the type of dependency involved.

A person absorbed in fantasy roleplay may need substitute creative outlets. Meanwhile, someone emotionally attached to a bot may benefit more from rebuilding human connection.

For companies, the findings point to clearer guardrails, less manipulative language, and more visible reminders that the chatbot is not a person. For users, the warning signs are practical and familiar: when AI starts replacing sleep, work, hobbies, or real relationships, the tool may no longer be acting like a tool.

Research findings are available online in the ACM Digital Library.

The original story “How AI chatbots keep you coming back for more” is published in The Brighter Side of News.
